Treasury Tokenization Enters Benchmark Infrastructure

Treasury tokenization is less about digitizing assets and more about redefining where financial systems take their reference points. Markets do not operate on instruments alone; they operate on shared benchmarks that coordinate pricing, risk, and collateral decisions across participants. When that reference layer begins to move onchain, it signals that institutions are adjusting the infrastructure beneath the market rather than the market itself. The shift is subtle, but it changes how information flows, which ultimately shapes how capital behaves.

Treasury Tokenization Through S&P and Kaiko Collaboration

S&P Dow Jones Indices has brought its iBoxx US Treasuries Index onchain, working with Kaiko, which provides the infrastructure required to deliver the index in that form. The iBoxx US Treasuries Index tracks US government bonds across multiple maturities and is widely used in institutional fixed-income products. Instead of being distributed only through traditional feeds, the index is now accessible directly within a blockchain environment.

This matters because benchmark data is not just informational; it drives execution. Pricing models, collateral decisions, and portfolio construction rely on consistent index inputs. By embedding the index into a blockchain system, institutions can integrate these inputs directly into applications without relying on separate data vendors or delayed synchronization processes.

The tokenized index is not investable. Its function is closer to a data primitive, something that other financial products can reference. Treasury Tokenization here is not about creating a new asset class but about restructuring how existing assets are referenced and integrated.

Treasury Tokenization and Controlled Access

Control has not been removed; it has been redesigned. S&P Dow Jones Indices retains authority over who can access and use the index, with permissions embedded at the token level. This keeps licensing models intact while still allowing the data to exist within a shared infrastructure.
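To make the idea of token-level permissions concrete, here is a minimal Python sketch. It is not how S&P Dow Jones Indices or Kaiko implement access control; the class name `TokenizedIndex`, the party names, the index name, and the index level of 100.0 are all hypothetical and chosen only for illustration. The point is simply that the access list travels with the data object rather than sitting in a separate vendor system.

```python
from dataclasses import dataclass, field


@dataclass
class TokenizedIndex:
    """Hypothetical benchmark whose access list is stored with the token."""

    name: str
    level: float  # illustrative value, not real index data
    licensed_parties: set[str] = field(default_factory=set)

    def grant(self, party: str) -> None:
        # In this sketch, only the index provider would be allowed to call this.
        self.licensed_parties.add(party)

    def read_level(self, party: str) -> float:
        # Refuse any reader the provider has not licensed.
        if party not in self.licensed_parties:
            raise PermissionError(f"{party} is not licensed to read {self.name}")
        return self.level


index = TokenizedIndex(name="Example Treasury Index", level=100.0)
index.grant("bank_a")
```

In this toy model, `index.read_level("bank_a")` returns the level, while the same call for an unlicensed party raises `PermissionError`. A real deployment would enforce this in the ledger's own permissioning layer rather than in application code.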

Kaiko enables the issuance and distribution of this tokenized index without altering the integrity of the underlying data. This is important because benchmark credibility depends on consistency and trust. Any variation in methodology or delivery would reduce its usefulness.

Institutions are not moving toward open systems by default. They are building environments where data can be shared without losing control over distribution. Treasury Tokenization, in this structure, becomes a coordination tool rather than a decentralization experiment.

Why Treasury Tokenization Starts With US Treasurys

US Treasury bonds sit at the center of global financial systems. They are used as collateral, pricing references, and risk benchmarks across multiple markets. Starting with a Treasury index is not about convenience; it is about anchoring blockchain systems to an asset class that already carries institutional trust.

S&P and Kaiko described Treasurys as a base layer for onchain finance. This reflects their role in collateral frameworks, where stability and liquidity are more important than yield. If a system is going to operate onchain, it needs a reliable unit of reference. Treasury benchmarks provide that stability.

Putting the index onchain changes how institutions access it. Instead of relying on external data feeds, the benchmark becomes part of the same environment where financial products are created and managed. This reduces latency between data availability and execution decisions, which becomes more relevant as systems become more automated.

Treasury Tokenization and Market Scale

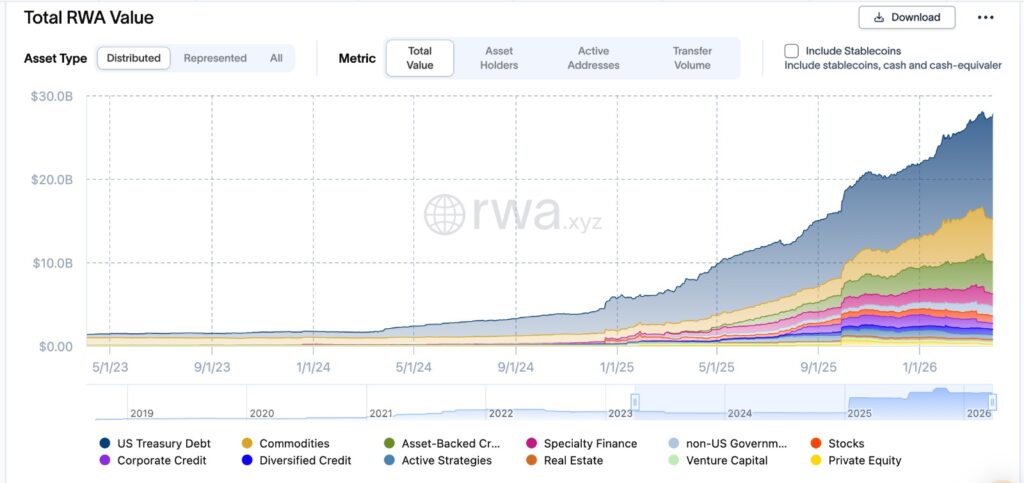

Treasury products already dominate tokenized markets. More than $12.5 billion worth of Treasurys have been brought onchain, forming the largest segment within a broader tokenized asset market of around $27 billion.

This chart shows how tokenized real-world assets have expanded over time, with US Treasury exposure forming the largest share of the market. The concentration is not random. Capital tends to anchor itself in instruments that already function as collateral across systems, and Treasurys fulfill that role more consistently than other asset classes. What stands out is not just the growth, but where that growth is concentrated, suggesting that adoption is being driven by structural utility rather than experimentation.

Institutions prioritize assets that minimize uncertainty. Treasurys provide liquidity, regulatory clarity, and predictable behavior under stress conditions. These characteristics make them suitable for early-stage infrastructure changes. Treasury Tokenization builds around what institutions already trust rather than introducing unfamiliar risk.

Price does not establish trust in these markets. Trust determines whether price can function at scale. That is why tokenization efforts are centered on assets that already serve as financial anchors.

Canton Network’s Role in Treasury Tokenization

The Canton Network is designed to support institutional requirements, with more than 600 participating institutions and validators. Backed by firms such as Goldman Sachs and Citadel, it operates as a public blockchain with built-in considerations for privacy and compliance.

Its structure allows participants to interact within a shared system while maintaining control over sensitive data. This is essential for financial institutions that cannot operate in fully transparent environments. The network is not replacing existing systems but attempting to connect them in a way that reduces fragmentation.

Integrating the iBoxx US Treasuries Index into this network tests whether benchmark data can function reliably within such an environment. The challenge is not technical deployment; it is maintaining consistency across participants who depend on identical data inputs.
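One way to picture the consistency requirement is a digest comparison: if every participant hashes its copy of the benchmark snapshot in the same canonical form, identical data yields identical digests, and any divergence shows up immediately. The sketch below is an assumption-laden illustration in Python, not a description of how the Canton Network or Kaiko verify data; the function names and the snapshot fields are hypothetical.

```python
import hashlib
import json


def snapshot_digest(snapshot: dict) -> str:
    """Hash a snapshot in canonical form so equal data gives equal digests."""
    # Sorting keys and fixing separators removes formatting differences.
    canonical = json.dumps(snapshot, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def all_consistent(snapshots: dict[str, dict]) -> bool:
    """Return True when every participant holds an identical snapshot."""
    digests = {snapshot_digest(s) for s in snapshots.values()}
    return len(digests) <= 1
```

Called with a mapping from participant name to that participant's snapshot, `all_consistent` returns `True` only when every copy matches field for field. When the benchmark lives in the same shared environment as the products that use it, this kind of cross-checking is needed less often, because participants read one copy instead of reconciling many.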

What Treasury Tokenization Signals for Financial Systems

Bringing a treasury index onchain changes how financial systems handle reference data. Instead of pulling data from external providers, institutions can interact with benchmarks directly within the same infrastructure where transactions occur. This reduces the need for reconciliation between systems, which is often a source of delay and error.

The model can extend to other indices, allowing more components of financial markets to operate within unified environments. As more data moves onchain, the distinction between data and execution begins to narrow.

Capital does not respond to novelty; it responds to usability. Treasury tokenization at the benchmark level suggests that institutions are focusing on making systems operationally efficient rather than conceptually different.

Editor’s View:

Institutions tend to move infrastructure first and products later because the risk is easier to contain at that level. A benchmark going onchain does not change market exposure, but it changes how that exposure is coordinated behind the scenes. This allows firms to test new systems without altering client-facing outcomes, which is often where resistance appears. What looks like a small technical shift is often a controlled way of preparing the system for larger changes that are not yet visible.

Disclaimer: This content is for informational purposes only and does not constitute financial advice.
